GAN (Generative Adversarial Networks)

paper: https://arxiv.org/pdf/1406.2661

cover: いずもねる

I’m so sad :<

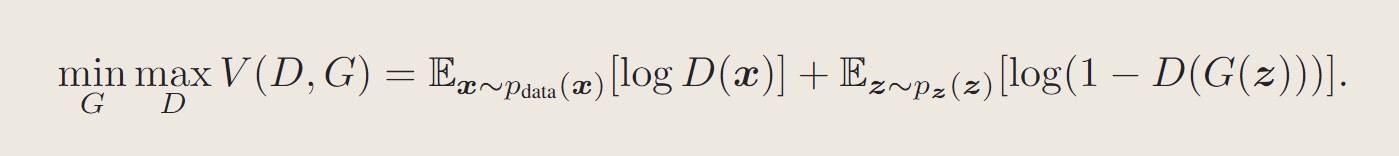

Adversarial nets
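
For reference, the two-player minimax value function that the paper labels Eq. 1 can be written as below. Here $D$ is the discriminator, $G$ the generator, $p_{\text{data}}$ the data distribution and $p_z$ the noise prior, following the paper's notation.

$$
\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}\left[\log D(x)\right] + \mathbb{E}_{z \sim p_z(z)}\left[\log\left(1 - D(G(z))\right)\right]
$$

D tries to assign high probability to real samples and low probability to generated ones, while G tries to make $D(G(z))$ large.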

Train

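Early in training, G is still poor, so D can reject its samples with high confidence and $\log(1 - D(G(z)))$ saturates. Instead of minimizing that term, G is trained to maximize $\log D(G(z))$, which gives much stronger gradients early on.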
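
A small sketch of why the choice of generator objective matters early in training. It assumes the discriminator output is a sigmoid of a logit `a`, so that D(G(z)) = sigmoid(a); the function name `generator_grads` is just for illustration. It compares the gradient of log(1 - D(G(z))) with the gradient of log D(G(z)), both taken with respect to `a`.

```python
import math

def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))

def generator_grads(logit):
    """Gradients of both generator objectives w.r.t. the discriminator
    logit a, assuming D(G(z)) = sigmoid(a)."""
    d = sigmoid(logit)
    saturating = -d            # d/da log(1 - sigmoid(a))
    non_saturating = 1.0 - d   # d/da log(sigmoid(a))
    return saturating, non_saturating

# A very negative logit means D confidently rejects the generated sample.
for a in (-6.0, -2.0, 0.0):
    s, n = generator_grads(a)
    print(f"logit={a:+.1f}  D(G(z))={sigmoid(a):.4f}  "
          f"|grad log(1-D)|={abs(s):.4f}  |grad log D|={abs(n):.4f}")
```

When D(G(z)) is close to 0, the first gradient is close to 0 as well, while the second stays close to 1, so the generator still receives a useful signal.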

The algorithm

In the paper, the authors explain the algorithm used to optimize Eq. 1, but it is a bit too deep for me. I may watch some videos explaining it later.
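
To make the structure of the paper's Algorithm 1 a bit more concrete, here is a minimal sketch of the alternating updates: k gradient-ascent steps on the discriminator, then one gradient-descent step on the generator. Everything about the setup is an assumption for illustration and not from the paper: real data drawn from N(3, 1), a generator G(z) = z + b with a single parameter, a logistic discriminator D(x) = sigmoid(w x + c), plain SGD with hand-written gradients, and the name `train_toy_gan`.

```python
import math
import random

def sigmoid(a):
    # Numerically stable logistic function.
    if a >= 0:
        return 1.0 / (1.0 + math.exp(-a))
    e = math.exp(a)
    return e / (1.0 + e)

def train_toy_gan(iterations=200, k=1, batch=32, lr=0.05, seed=0):
    rng = random.Random(seed)
    w, c = 0.1, 0.0   # discriminator D(x) = sigmoid(w * x + c)
    b = 0.0           # generator G(z) = z + b
    d_steps = g_steps = 0
    for _ in range(iterations):
        # k discriminator steps: ascend log D(x) + log(1 - D(G(z))).
        for _ in range(k):
            grad_w = grad_c = 0.0
            for _ in range(batch):
                x = rng.gauss(3.0, 1.0)          # real sample (toy data)
                fake = rng.gauss(0.0, 1.0) + b   # generated sample G(z)
                d_real = sigmoid(w * x + c)
                d_fake = sigmoid(w * fake + c)
                grad_w += (1.0 - d_real) * x - d_fake * fake
                grad_c += (1.0 - d_real) - d_fake
            w += lr * grad_w / batch
            c += lr * grad_c / batch
            d_steps += 1
        # One generator step: descend log(1 - D(G(z))) w.r.t. b.
        grad_b = 0.0
        for _ in range(batch):
            fake = rng.gauss(0.0, 1.0) + b
            grad_b += -sigmoid(w * fake + c) * w
        b -= lr * grad_b / batch
        g_steps += 1
    return w, c, b, d_steps, g_steps

print(train_toy_gan(iterations=50, k=2))
```

The point of the sketch is the loop structure, not the quality of the result: the generator shift b only moves toward the data mean because the discriminator is updated first and tells it which direction looks more "real".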

Experiment

Datasets: MNIST, the Toronto Face Database (TFD), and CIFAR-10.

The generator nets used a mixture of rectifier linear activations and sigmoid activations.

The discriminator net used maxout activations.
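
As a reminder of what a maxout activation computes, here is a minimal sketch of a single maxout unit: it takes the maximum over several affine pieces of the input. The function name and the example weights are made up for illustration and are not the configuration used in the paper.

```python
def maxout(x, weights, biases):
    """One maxout unit: the max over k affine pieces w_j . x + b_j."""
    pieces = [sum(wi * xi for wi, xi in zip(w, x)) + b
              for w, b in zip(weights, biases)]
    return max(pieces)

# Two pieces with weights (1, 0) and (-1, 0) and zero bias give |x_0|.
print(maxout([-3.0, 5.0], [[1.0, 0.0], [-1.0, 0.0]], [0.0, 0.0]))  # 3.0
```

With one piece fixed at zero, the same unit reduces to a ReLU, so maxout can be seen as a learned, piecewise-linear generalization of it.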

Dropout was applied in training the discriminator net.

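The probability of the test data under the generator's distribution is estimated by fitting a Gaussian Parzen window to samples generated by G and reporting the log-likelihood under that distribution. The σ of the Gaussians is chosen by cross-validation on the validation set.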
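
A minimal sketch of a Gaussian Parzen window estimate, assuming an isotropic Gaussian with bandwidth `sigma` centred on each generated sample. The function name `parzen_log_likelihood` is hypothetical, and this is not the paper's evaluation code, only the formula it relies on.

```python
import math

def parzen_log_likelihood(x, samples, sigma):
    """Log-density of point x under an isotropic Gaussian Parzen window
    centred on each generated sample."""
    d = len(x)
    exponents = [
        -sum((xi - si) ** 2 for xi, si in zip(x, s)) / (2.0 * sigma ** 2)
        for s in samples
    ]
    m = max(exponents)  # log-sum-exp trick for numerical stability
    log_sum = m + math.log(sum(math.exp(e - m) for e in exponents))
    log_norm = math.log(len(samples)) + 0.5 * d * math.log(2.0 * math.pi * sigma ** 2)
    return log_sum - log_norm

# Toy usage: two 1-D "generated" samples, one test point.
print(parzen_log_likelihood([0.0], [[-1.0], [1.0]], sigma=1.0))
```

In practice this would be averaged over all test points, and `sigma` would be picked by trying several values on a validation set.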

- Title: GAN(Generative Adversarial Networks)

- Author: MelodicTechno

- Created: 2024-09-05 15:07:30

- Updated: 2026-02-17 18:51:17

- Link: https://melodictechno.github.io./2024/09/05/gan/

- License: This article is licensed under CC BY-NC-SA 4.0.